fetch_toxicity

- skrub.datasets.fetch_toxicity(data_home=None)

Fetch the toxicity dataset (classification), available at https://github.com/skrub-data/skrub-data-files.

This is a balanced binary classification use case, where the single table consists of only two columns:

- text: the text of the comment
- is_toxic: whether or not the comment is toxic

Size on disk: 220KB.

- Parameters:

- data_home : str or path-like, default=None

The directory in which the files are downloaded and unzipped.

- Returns:

- bunch : Bunch

A dictionary-like object with the following keys:

- toxicity : DataFrame of shape (1000, 2)

The full dataframe, containing the text and is_toxic columns.

- X : DataFrame of shape (1000, 1)

Features, i.e. the dataframe without the target.

- y : DataFrame of shape (1000, 1)

Target labels.

- metadata : dict

A dictionary containing the name, description, source and target.

- path : str

The path to the toxicity CSV file.
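The keys listed above can be read off the returned bunch as in the following minimal sketch. The helper names `load_toxicity` and `describe_toxicity` are hypothetical and not part of skrub; the sketch also assumes the bunch supports attribute-style access to its keys, and that skrub is installed with network access available on the first call.

```python
def load_toxicity(data_home=None):
    """Hypothetical wrapper: fetch the toxicity dataset and return the bunch."""
    # Imported lazily so that defining this helper does not require skrub.
    from skrub.datasets import fetch_toxicity

    # data_home=None lets skrub pick its default download directory.
    return fetch_toxicity(data_home=data_home)


def describe_toxicity(bunch):
    """Hypothetical helper: summarize a bunch using only the documented keys."""
    return {
        "n_rows": len(bunch.toxicity),             # full table
        "feature_columns": list(bunch.X.columns),  # table without the target
        "target_columns": list(bunch.y.columns),   # target labels
        "path": str(bunch.path),                   # location of the CSV file
    }
```

Calling `describe_toxicity(load_toxicity())` would then report the row count, the feature and target column names, and the path of the downloaded CSV file. Passing a directory as `data_home` stores the files there instead of the default location.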


Gallery examples

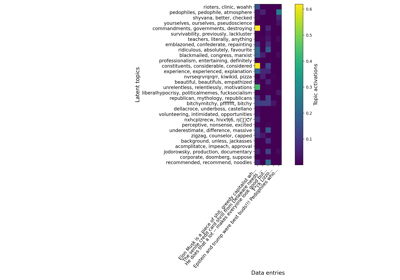

Various string encoders: a sentiment analysis example
